Security teams rely on tools to organize their work. Dashboards glow, alerts arrive on time, and compliance views flip from red to green. That visual clarity reassures leadership and conveys a sense that risk is under control. However, a quiet dashboard does not guarantee safety. It only means the tool found nothing. Attackers do not care how polished the security stack looks. They look for weak seams, misconfigurations, neglected assets, and predictable human behavior.

That is why tools are most useful when they support deeper security work. Automated pentest reporting can help teams write up findings faster, keep them consistent, and give stakeholders a clearer picture of what needs to be fixed. Its real value grows when teams treat it as the start of inquiry, validation, and follow-up rather than the end of the story. Security tools can make a program much stronger, but they work best when people apply their own judgment and are willing to ask what might still be missing.

When Metrics Distort Reality

A problem arises when security metrics shape perception rather than reflect reality. Executives assume risk has dropped when patch compliance rises. When vulnerability counts fall, teams may believe exposure has fallen with them. Those signals are useful but incomplete. Exception lists, unmanaged systems, legacy software, and poorly inspected production assets are often where the real exposure sits, and they are frequently outside what the metric counts.

The tools are not at fault here. The problem is treating a measurable outcome as the truth. Teams may prioritize what is easy to count over what matters. A clean report may simply reflect narrow visibility, incomplete asset coverage, or tuning decisions that reduce noise without reducing risk. When organizations conflate reporting efficiency with security maturity, blind spots form.
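The gap between a clean report and actual coverage can be made concrete with a little arithmetic. The sketch below is illustrative only: the `Asset` fields, the two compliance functions, and the ten-asset inventory are all hypothetical, and it assumes that an asset the scanner never saw cannot be counted as patched. It compares compliance measured over what the tool sees with compliance measured over everything the organization owns.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    """Hypothetical inventory record; field names are assumptions."""
    name: str
    scanned: bool           # did the scanner actually cover this asset?
    patched: bool           # is the asset verified as patched?
    excepted: bool = False  # is it on an exception or waiver list?


def reported_compliance(assets):
    """Compliance as a dashboard might show it: scanned, non-excepted assets only."""
    in_scope = [a for a in assets if a.scanned and not a.excepted]
    if not in_scope:
        return 0.0
    return sum(a.patched for a in in_scope) / len(in_scope)


def inventory_compliance(assets):
    """Compliance over the whole inventory; unscanned assets count as unverified."""
    if not assets:
        return 0.0
    return sum(a.scanned and a.patched for a in assets) / len(assets)


# Made-up inventory: 6 healthy servers, 2 waived legacy hosts, 2 unmanaged systems.
INVENTORY = (
    [Asset(f"web-{i}", scanned=True, patched=True) for i in range(6)]
    + [Asset(f"legacy-{i}", scanned=True, patched=False, excepted=True) for i in range(2)]
    + [Asset(f"unmanaged-{i}", scanned=False, patched=False) for i in range(2)]
)

print(f"Reported:  {reported_compliance(INVENTORY):.0%}")   # Reported:  100%
print(f"Inventory: {inventory_compliance(INVENTORY):.0%}")  # Inventory: 60%
```

In this invented example the dashboard is green at 100%, yet four of ten assets are either waived or invisible to the scanner. Neither number is wrong. They answer different questions, and only one of them is usually on the slide.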

Skills Erode Under Automation

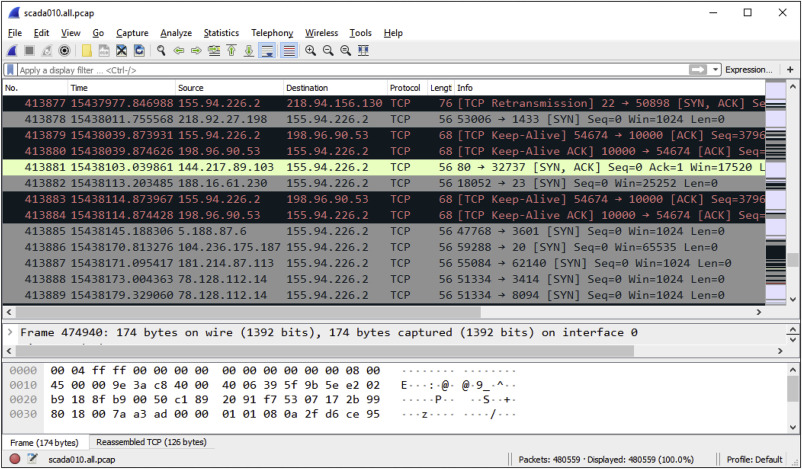

Another consequence is the slow erosion of human judgment. When teams lean too heavily on scanners, automated remediation, and prebuilt workflows, they stop exercising the instincts that matter during real incidents. Packet analysis feels unnecessary until traffic is misclassified. Threat modeling seems optional until a business workflow creates an unexpected escalation path. Over time, fewer people practice asking basic but essential questions about what looks normal and what does not.

This affects engineering teams as well. If developers only correct what a tool flags, they may stop learning why the weakness exists in the first place. The result is a workforce that can respond to prompts but struggles to reason through new or unusual conditions. Attackers do not need to bypass every tool forever. They need only one moment when the tool misses something, and the humans behind it cannot adapt.

A Healthier Way to Use Tools

Strong security programs do not reject tools. They refuse to treat them as unquestionable authorities. Useful programs build in friction where it matters. They validate assumptions manually, review logs for sanity rather than only for compliance, and test business processes as well as technical controls. They also keep inventories current, because forgotten systems often create the easiest openings.

Leadership matters too. A clean dashboard should prompt sharper questions, not confidence. What was not logged, scanned, or validated? Was anything waived, omitted, or delayed? Tool sprawl deserves particular attention because every handoff between platforms is another seam an attacker can exploit. Often, fewer tools with clear ownership and careful tuning give better protection than a vast stack. Automation helps, but human curiosity, technical judgment, and regular validation are what keep security programs honest.